
So, the test would show that my masterpiece is actually mediocre or worse. In a false positive situation, I would reject a null hypothesis that is true. “The number of times my article is read will be more than the number of similar articles I have posted” Had I swapped the null and alternative hypotheses, the errors would have been swapped, too. Now, this is a case where the worse situation is the false positive, however, a crucial fact is that I STATED the null hypothesis in a specific way. This is not the best outcome, I may have missed an opportunity but that is in no way as devastating as the false positive where I believe I have done something to improve my career but instead potentially ruined it. However, I am a driven person that learns from his ‘mistakes’ so instead try different techniques and potentially create even better writings. Of course, I will not attempt to write articles in this style any time soon. This would occur if, say, this article was a masterpiece of blog writing, but my test shows me that it is not even mediocre. What about the type II error – the false negative? This will no doubt will affect my career and self-esteem in a negative way. Yes, here my false positive has a bad outcome, I will inevitably think my article is better than it is and, from now on, write all my articles in the same style, ultimately hurting my blog traffic. My test showed that I performed above average, but in fact, I did not. I rejected a null hypothesis that was true. This article performed above average – Great! There’s my positive.If I reject the null hypothesis, this means one of two things. With this in mind, the null hypothesis I will choose is: “The number of times my article is read will be less or equal to the number of similar articles I have posted”. So, let’s say I want to see if this particular article is performing better than the average of the other articles I have posted. 
The proper scientific approach is to form a null hypothesis in a way that makes you try to reject it. That’s further justified by the fact that a frequently asked question at data science interviews is: ‘give examples when false negative is the bigger problem’. I read in many places that the answer to this question is: a false positive. Which error would you say is more serious?Ī false positive (type I error) – when you reject a true null hypothesis – or a false negative (type II error) – when you accept a false null hypothesis? We've also made a video on the topic - you can watch it below, or scroll down if you prefer reading.įalse Positive and False Negative: Which Error Is More Serious? If you would like to get an understanding of how to do that, we have made an explainer video on the subject here. Lucky them!ĭisclaimer: This article is not here to teach you how to distinguish between the two. Then hopefully, after reading it, you will be itching to tell your loved ones all about type I error and type II error. You know those teachers who frantically talk about a subject that nobody understands or wants to understand? Yeah, that’s me now! And its great, so I want to bring you to my level of excitement with this article by showing you how these two errors have practical implications in different and interesting real-life settings. Seeing their real-world applications and uses helped me go from an uninterested student to an enthusiastic teacher. The more I understood and encountered these errors the more they started to excite and interest me. Throughout the years, though, I began to have a change of heart.

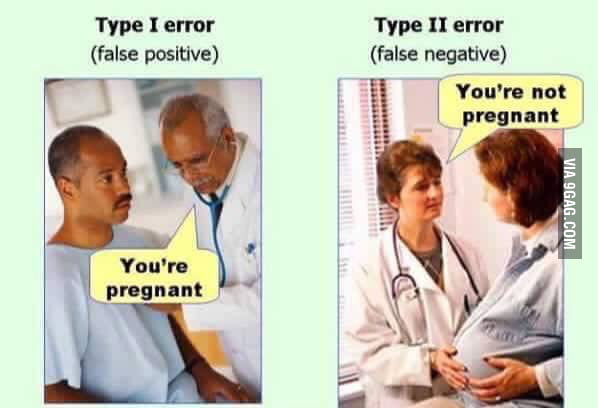

There are two errors that often rear their head when you are learning about hypothesis testing – false positive and false negative, technically referred to as type I error and type II error respectively.Īt first, I was not a huge fan of the concepts, I couldn’t fathom how they could be at all useful.
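To make the point about swapping hypotheses concrete, here is a minimal Python sketch of the blog-article example. It is only an illustration: the function names `classify_outcome` and `label_blog_test` and their boolean parameters are made up for this sketch and do not come from any library. It labels each outcome depending on whether the null hypothesis says "at or below average" or "above average".

```python
def classify_outcome(null_is_true: bool, null_rejected: bool) -> str:
    """Name the outcome of a test, given the truth and the decision."""
    if null_rejected:
        # Rejecting a true null is the type I error.
        return "false positive (type I error)" if null_is_true else "true positive"
    # Failing to reject a false null is the type II error.
    return "true negative" if null_is_true else "false negative (type II error)"


def label_blog_test(article_is_above_average: bool,
                    test_says_above_average: bool,
                    null_claims_above_average: bool) -> str:
    """Label the blog example under either statement of the null.

    null_claims_above_average=False: null is "read no more than similar articles".
    null_claims_above_average=True:  the swapped null, "read more than similar articles".
    (Hypothetical helper, simplified to yes/no answers for illustration.)
    """
    # The null is true when reality matches what the null claims.
    null_is_true = article_is_above_average == null_claims_above_average
    # The test rejects the null when its verdict contradicts the null's claim.
    null_rejected = test_says_above_average != null_claims_above_average
    return classify_outcome(null_is_true, null_rejected)


if __name__ == "__main__":
    # A masterpiece that the test fails to recognise, under both null statements.
    for swapped in (False, True):
        print("swapped null:", swapped, "->",
              label_blog_test(True, False, null_claims_above_average=swapped))
```

The same mistaken verdict (a masterpiece judged mediocre) is a false negative under the original null and a false positive under the swapped one, which is why the way the null hypothesis is stated matters when deciding which error is worse.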
